The European Testing Conference 2018 took place in Amsterdam. It was my first time attending this conference, and what can I say, it was an amazing experience. The organizers made the attendees feel as comfortable as possible and were nearby all the time. Everyone contributed to the experience, as some sessions, like the SpeedMeet or the Open Space, enabled networking and thrived on everyone’s participation. I think this is one of the most important parts of a conference: getting to know people, helping each other and sharing learnings and experience. So including networking in the schedule makes the EuroTestConf special.

The keynotes and talks were really great as well, and you could see that the organizers put a lot of effort into finding the right speakers and the most interesting topics. The speakers shared a lot of insights and gave very practical examples. The workshops were another highlight. In total there were five workshops, each held twice, so you got to choose two of them to attend. I am used to one or more days of workshops prior to the conference days, so it was a surprise that each attendee could participate in two workshops this time.

But enough of that, let’s get to my summaries of the sessions I attended:

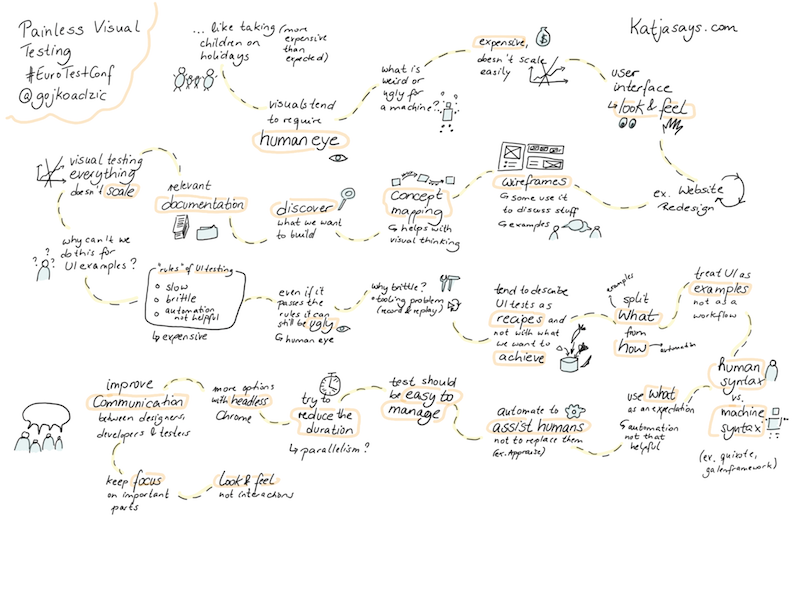

Painless visual testing

Gojko Adzic

Visual testing is not as easy as it might seem, because it tends to require the human eye. How can a machine know that something that technically works still looks weird or ugly to the person looking at it? This means you have to include the look and feel when testing visuals, which makes it expensive and hard to scale. What might help is discussing wireframes or doing some concept mapping. You should think about what you want to build and write down some relevant documentation. Test the most important stuff, because visually testing everything simply won’t scale. Try to move from describing UI tests like a recipe to describing what you want to achieve with them, which means separating the what from the how. Automation can assist humans with visual testing, but it cannot replace them. If you automate parts of it, keep the tests as short as possible and easy to manage.

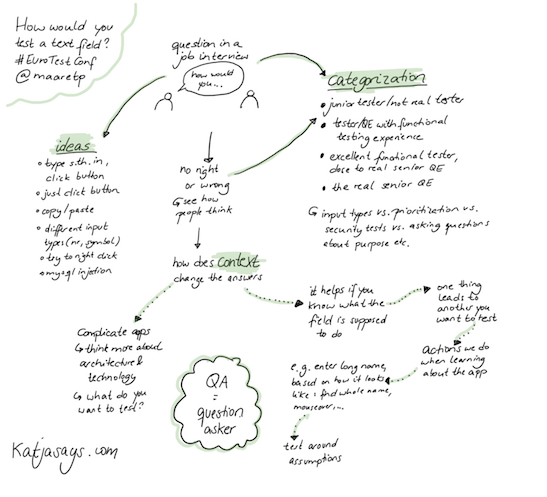

How to test a text field

Maaret Pyhäjärvi

When testing a text field there are a lot of test cases that come to mind. Some examples are:

- type something in, click the submit button

- just click the button

- copy/paste into the text field

- use different input types (numbers, symbols)

- try to right-click

- enter an SQL expression

When using this question in a job interview, Maaret wanted to see how the applicant thinks and what ideas come to mind. With that information she could categorize the applicant as a junior tester, a tester with some functional testing experience, an excellent senior tester or a real senior QE.

When thinking about how to test a text field, its context can change the answers a lot. Once you know more about what the field is supposed to do, one idea leads to the next thing you want to test. It’s all about the actions you take while learning about the app that uses the text field.
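The test ideas listed above can be written down as a small table of inputs and expectations. The following is only a sketch: the `accept_input` function, the 50-character limit and the acceptance rules are hypothetical stand-ins for whatever the real text field is supposed to do, which is exactly the context the talk says you need to learn first.

```python
# Hypothetical stand-in for the behaviour behind a text field's submit button.
# The rules (non-empty, at most 50 characters, printable only) are assumptions.
def accept_input(text: str, max_length: int = 50) -> bool:
    stripped = text.strip()
    return 0 < len(stripped) <= max_length and stripped.isprintable()


# Each case: (test idea, input, expected result under the assumed rules)
CASES = [
    ("type something in", "hello", True),
    ("just click the button", "", False),
    ("whitespace only", "   ", False),
    ("numbers", "12345", True),
    ("symbols", "!@#$%", True),
    ("pasted text with a line break", "line one\nline two", False),
    ("SQL expression is treated as plain text", "' OR 1=1 --", True),
    ("longer than the limit", "a" * 51, False),
]


def run_cases():
    """Return (test idea, passed) for every case in the table."""
    return [(name, accept_input(value) == expected)
            for name, value, expected in CASES]


if __name__ == "__main__":
    for name, passed in run_cases():
        print(f"{'PASS' if passed else 'FAIL'}: {name}")
```

A table like this only covers the ideas you already had. Right-clicking, copy/paste behaviour in a real browser and anything you discover while exploring still need a human at the keyboard.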

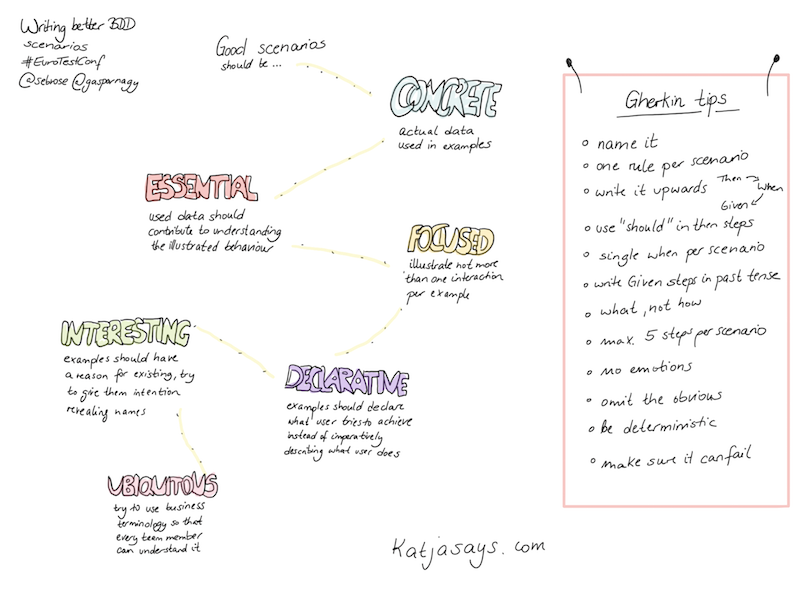

Writing better BDD scenarios

Seb Rose & Gáspár Nagy

This workshop was all about how to improve BDD scenarios. In some group work we tried to figure out what to improve in given scenarios or how to write one on our own. Good scenarios should be…

- CONCRETE: actual data used in examples

- ESSENTIAL: used data should contribute to understanding the illustrated behaviour

- FOCUSED: illustrate no more than one action per example

- INTERESTING: examples should have a reason for existing, try to give them intention-revealing names

- DECLARATIVE: examples should declare what the user is trying to achieve instead of imperatively describing what the user does

- UBIQUITOUS: try to use business terminology so that every team member can understand it

So you can say that the Gherkin tips are to name the scenario and to use just one example for it. A good approach is to write it upwards (start with Then, end with Given), to use "should" in the steps and to avoid describing emotions (like "the user wants to"). The Given step should be written in the past tense, and you should try to keep the scenario down to five steps. Probably the most important thing is to make sure that the scenario can fail.
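To make the tips more tangible, here is a rough sketch of how such a scenario could look once it is automated. It is written as a plain Python test with Given/When/Then comments rather than in Gherkin, and the free-shipping rule, the amounts and the `shipping_cost` function are made up for illustration; they are not from the workshop.

```python
# Hypothetical business rule: baskets of 50.00 EUR or more ship for free.
FREE_SHIPPING_THRESHOLD = 50.00
SHIPPING_FEE = 4.90


def shipping_cost(basket_total: float) -> float:
    """Return the shipping cost for a basket under the assumed rule."""
    return 0.0 if basket_total >= FREE_SHIPPING_THRESHOLD else SHIPPING_FEE


def test_basket_at_threshold_should_ship_for_free():
    # Given a customer has filled a basket worth 50.00 EUR (past tense, concrete data)
    basket_total = 50.00
    # When the shipping cost is calculated (exactly one action)
    cost = shipping_cost(basket_total)
    # Then shipping should be free
    assert cost == 0.0


def test_basket_below_threshold_should_pay_the_shipping_fee():
    # Given a customer has filled a basket worth 49.99 EUR
    basket_total = 49.99
    # When the shipping cost is calculated
    cost = shipping_cost(basket_total)
    # Then the standard shipping fee should be charged
    assert cost == SHIPPING_FEE
```

Both tests use only the data that matters for the rule, have intention-revealing names and can fail: change the threshold in `shipping_cost` and the first one breaks.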

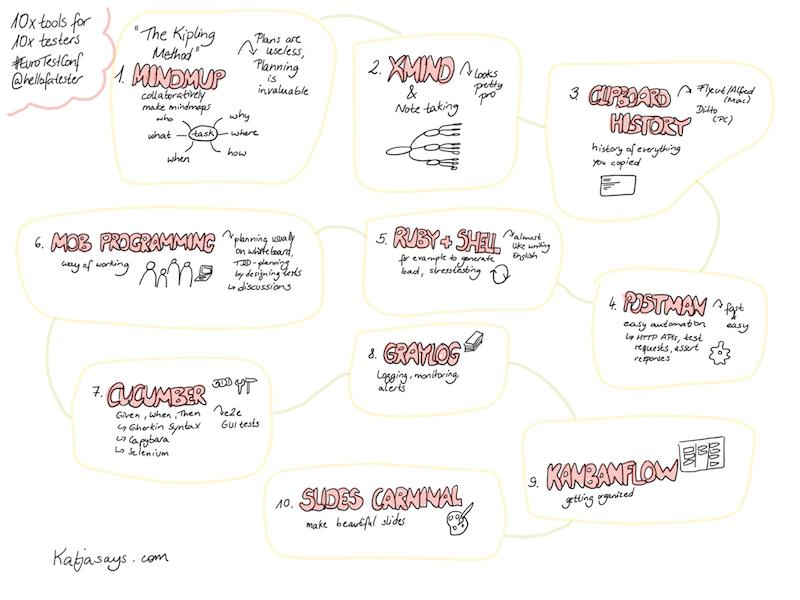

10x tools for 10x testers

Anssi Lehtelä

In this session, Anssi talked about 10 (types of) tools and ways of working that he likes to use for testing and showed a short demo of each of them. His tips are:

- MindMup

- Xmind

- Clipboard history tools

- Postman

- Ruby + Shell

- Mob programming

- Cucumber

- Graylog

- KanbanFlow

- Slides Carnival

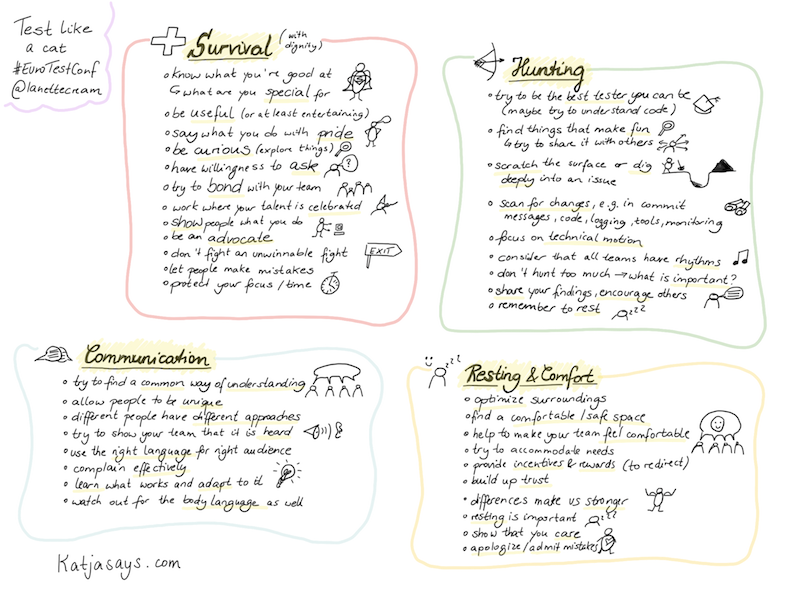

Test like a cat

Lanette Creamer

In the final keynote of day one, Lanette talked about how testers are similar to cats and what they can adopt from them for their daily work life. She divided the talk into four sections: Survival, Hunting, Communication and Resting & Comfort.

Survival

If you want to survive with dignity, you should know what you’re good at and try to be as useful as possible. Talk about what you are doing with pride. Be curious and try to explore many things, but also don’t hesitate to ask questions. Show people what you do and be an advocate about it. Try to work where your work is celebrated and bond with your team. Let people make mistakes and don’t fight unwinnable fights. You are special, so protect your time.

Hunting

Hunt for new knowledge to be the best tester you can be. Find things that are fun and share them with others. Keep the balance between scratching the surface and digging deep into an issue. Focus on technical motions – search for changes in commits, logging or monitoring. Keep in mind that all teams have their rhythms, and don’t hunt too much; focus on the most important things. Share your findings and encourage others, but also don’t forget to rest.

Communication

Try to find a common understanding with your team. Allow people to be unique and to have their own approaches. Use the right language for the right audience and show your team that it is heard. If you have complaints, complain efficiently. Learn what works and adapt to it, and also pay attention to body language.

Resting & Comfort

Optimize your surroundings and find a safe and comfortable space. Try to accommodate the needs of your team members and to make them feel comfortable. Build up trust, admit that you make mistakes and provide incentives and rewards. Differences make us stronger, so show that you care about everyone and their opinions. And don’t forget: resting is important.

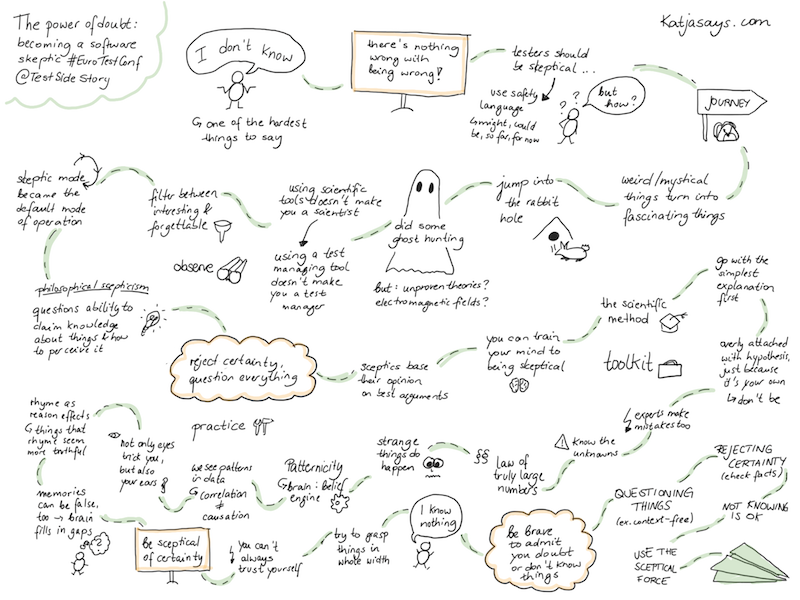

The power of doubt: becoming a software skeptic

Zeger Van Hese

"I don’t know" is one of the hardest things to say, though there’s nothing wrong with being wrong. To be safe, you can use safety language like "might", "could be", "so far" or "for now". As a tester it is a good thing to be skeptical and to ask questions about things.

In this keynote Zeger talked about his journey to becoming a software skeptic. It all started with weird and mystical things that turned into fascinating things. He jumped into the rabbit hole and did some ghost hunting. But using scientific tools (for example to measure electromagnetic fields) doesn’t make you a scientist, just like using a test management tool doesn’t make you a test manager. Somehow the skeptic mode became his default mode of operation. His tip is to reject certainty and to question everything, because you cannot even be sure about or trust yourself. The mind plays tricks on us all the time and sees things that aren’t there. We read causation into correlations where there is none. So try to grasp things as a whole and be brave enough to admit that you doubt or don’t know things.

Scripted vs. exploratory testing

Vernon Richards

Vernon showed us in this workshop that both scripted and exploratory testing have their pros and cons. We started with some scripted testing of a registration page, continued by testing it in an exploratory way and then focused on exploratory testing for a specific purpose. We saw that each of these ways of testing has something we like and some things that could be better. A summary of the learnings can be found in my sketchnote:

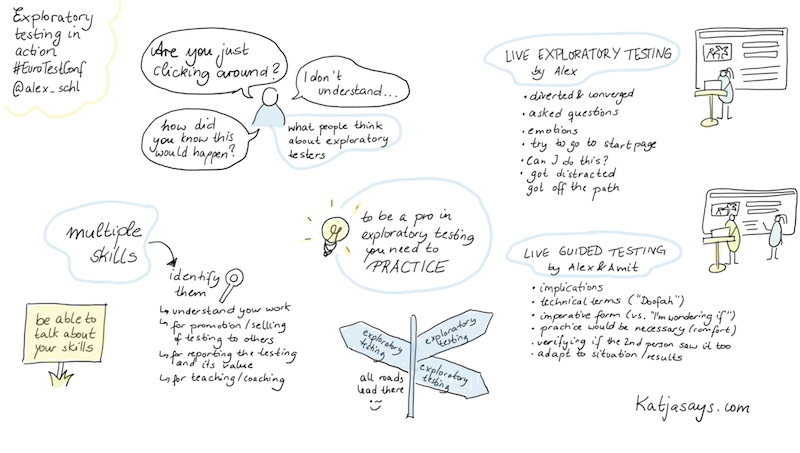

Exploratory testing in action

Alex Schladebeck

When you tell somebody that you are an exploratory tester you often get questions like "What are you doing? I don’t understand it.", "Are you just clicking around?" or "How did you know this would happen?". But exploratory testing is something you need to practice, and it requires multiple skills which you have to discover. Alex showed us exploratory testing in action in two different ways. First she tested a website on her own and then she guided someone else through testing a website. That showed us that it is not always easy to remain on the path and not get distracted. It’s sometimes hard to ask the right question, to be objective and not to judge things by personal opinion. When you are guiding someone, you should really adapt to the way your partner is testing and use language that everyone can understand.

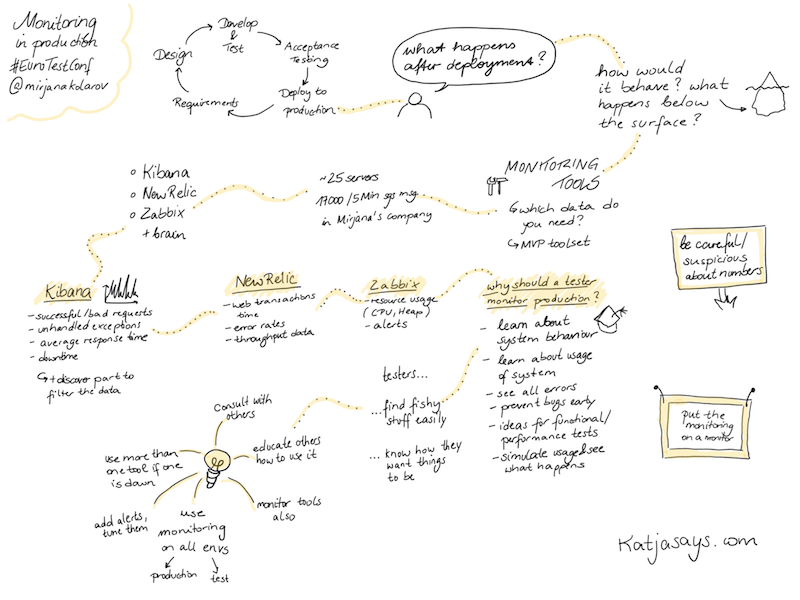

Monitoring in production

Mirjana Kolarov

The cycle of a product normally is:

- Define requirements

- Design the product

- Develop and test the product

- Do some acceptance testing

- Deploy to production

But what happens after the deployment? How does the product behave in production and what happens below the surface? To find out more about this, you need some monitoring. Tools that Mirjana recommends are Kibana, New Relic and Zabbix. They monitor things like successful and bad requests, response times, error rates or resource usage, and you can set up alerts. As a tester it is very useful to be included in the monitoring process, as you can learn more about the behaviour and the usage of the system, see errors and prevent bugs early. If you are part of the monitoring (or even do it on your own), don’t forget to:

- consult with others

- educate others on how to use it

- use more than one tool

- monitor the tool itself as well

- use monitoring in all environments

- add alerts and tune them
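To illustrate the "add alerts and tune them" point, here is a minimal sketch of a threshold check on the kind of numbers mentioned above (requests, error rates, response times). It does not use the API of Kibana, New Relic or Zabbix; the `WindowStats` structure and the default thresholds are assumptions you would tune for your own system.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    """Hypothetical summary of one monitoring window (e.g. the last 5 minutes)."""
    total_requests: int
    failed_requests: int
    p95_response_ms: float


def check_alerts(stats: WindowStats,
                 max_error_rate: float = 0.05,
                 max_p95_ms: float = 800.0) -> list[str]:
    """Return the alert messages raised by one window of stats."""
    if stats.total_requests == 0:
        # No traffic at all can also mean the app or the monitoring itself is down.
        return ["no requests seen in this window"]

    alerts = []
    error_rate = stats.failed_requests / stats.total_requests
    if error_rate > max_error_rate:
        alerts.append(f"error rate {error_rate:.1%} above {max_error_rate:.1%}")
    if stats.p95_response_ms > max_p95_ms:
        alerts.append(
            f"p95 response time {stats.p95_response_ms:.0f} ms above {max_p95_ms:.0f} ms"
        )
    return alerts


if __name__ == "__main__":
    print(check_alerts(WindowStats(total_requests=1000,
                                   failed_requests=100,
                                   p95_response_ms=300.0)))
```

Tuning then means adjusting `max_error_rate` and `max_p95_ms` until the alerts fire on real problems and stay quiet otherwise.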

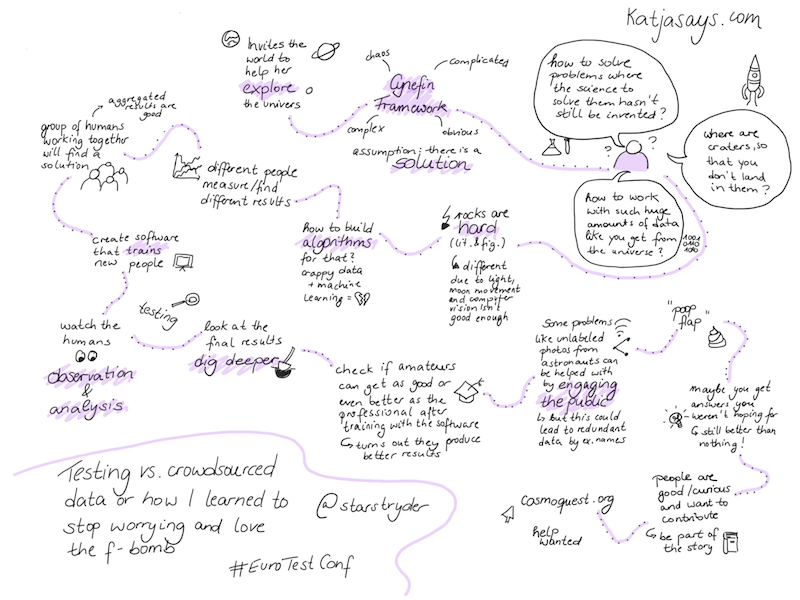

Testing vs. crowdsourced data or how I learned to stop worrying and love the f-bomb

Pamela Gay

In the final keynote of day two, Pamela invited us to help her explore the universe. In her work life she has to deal with questions like "How do you solve problems when the science to solve them hasn’t been invented yet?", "How do you work with the huge amount of data you get from the universe?" or "Where are the craters on planets, so that you don’t land in them?". Rocks are hard, both literally and figuratively. Depending on the movements of the moon or the lighting, they are or look different, and computer vision isn’t good enough for that. So how do you build algorithms to detect rocks and craters if you don’t have good enough data? While working with different people, it turned out that individuals measure differently and come to different results. If groups of people work together, though, the aggregated results are quite good. Therefore software was developed that trains people to discover different things, for example to detect and measure craters. Another way of getting relevant data was to crowdsource the work. For example, there are a lot of pictures taken by astronauts that are missing important labels like the exact location. These pictures are made publicly available so that the crowd can try to identify the locations. Although there is some redundant data and you get some answers you weren’t looking for, it is still better than not having any data at all.
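Why aggregated results from a group can beat a single person’s measurement can be shown with a tiny sketch. The keynote did not go into how the aggregation is actually done, so taking the median of each value is my own assumption here, and the crater marks are invented numbers.

```python
from statistics import median


def aggregate_marks(marks):
    """Combine several volunteers' marks for one crater.

    Each mark is an (x, y, diameter) tuple. The median is used (an assumption,
    not the project's documented method) because a single wild mark then
    barely moves the result.
    """
    xs, ys, diameters = zip(*marks)
    return (median(xs), median(ys), median(diameters))


if __name__ == "__main__":
    # Invented example: three volunteers roughly agree, one is far off.
    marks = [(10, 20, 5), (11, 19, 6), (50, 60, 30), (10, 21, 5)]
    print(aggregate_marks(marks))
```

With a plain average, the one outlier would drag the crater far away from where three out of four people saw it; the median stays close to the majority.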

All in all I enjoyed the conference very much and want to thank the organizers for such an amazing event. I met a lot of people, had a lot of interesting conversations and learned quite a few things. I loved that you could just talk to anybody and that you had lots of opportunities to ask questions and to get ideas on how to solve some of your problems. I hope that you liked the conference (or at least my summary, if you weren’t there) and am looking forward to your comments.
